Before you get started

A/B testing is one of those subjects where it’s easy to get drawn in and spend forever constructing the perfect test. Before you go back to uni to study data science, though, remember why you’re running the test in the first place. In our case, that’s to optimize our outbound campaigns.

It’s important to start with a solid understanding of sales sequences. What kind of approaches typically work for companies like yours? While it’s no excuse for not testing, a good foundation will give your campaign the best start, along with an understanding of the different components of a successful campaign that you might want to test. If you aren’t familiar with outbound outreach, read some blog posts from industry leaders, as well as books on sales development, to get a high-level understanding of how to create high-performing outbound sequences.

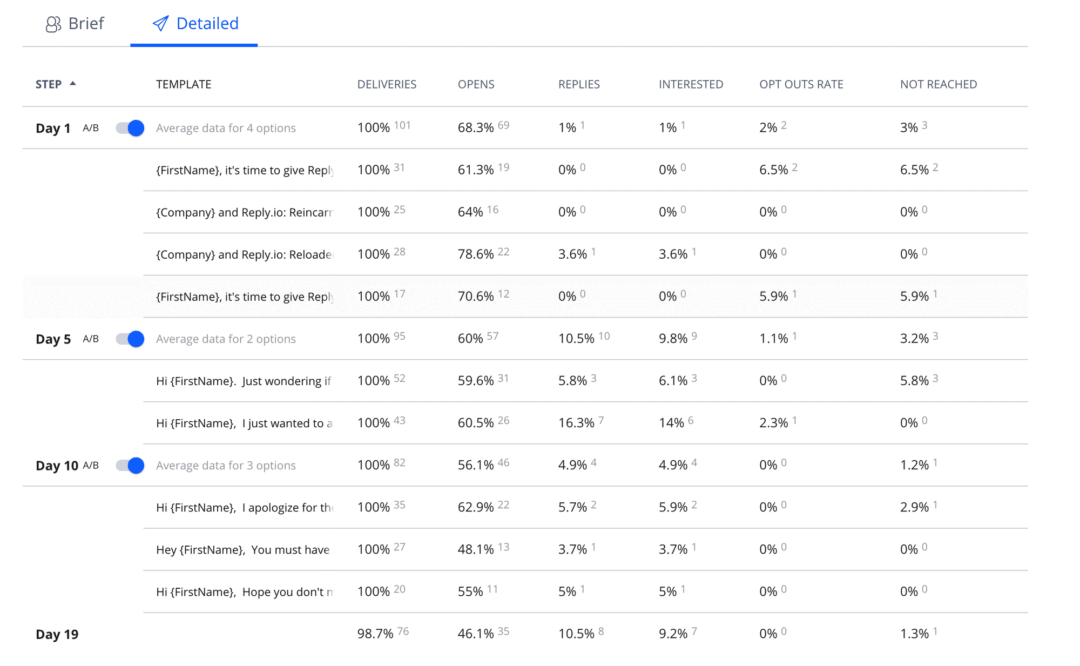

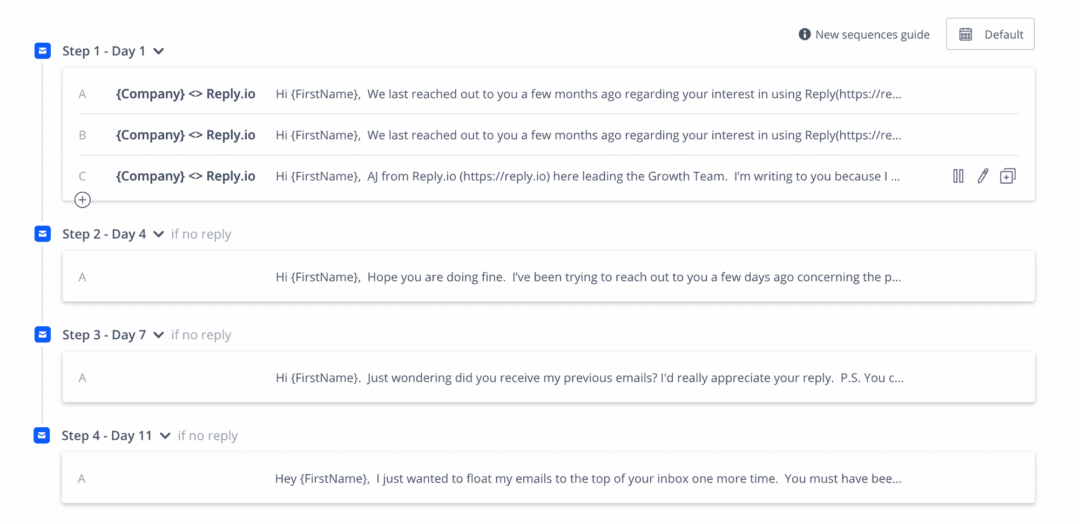

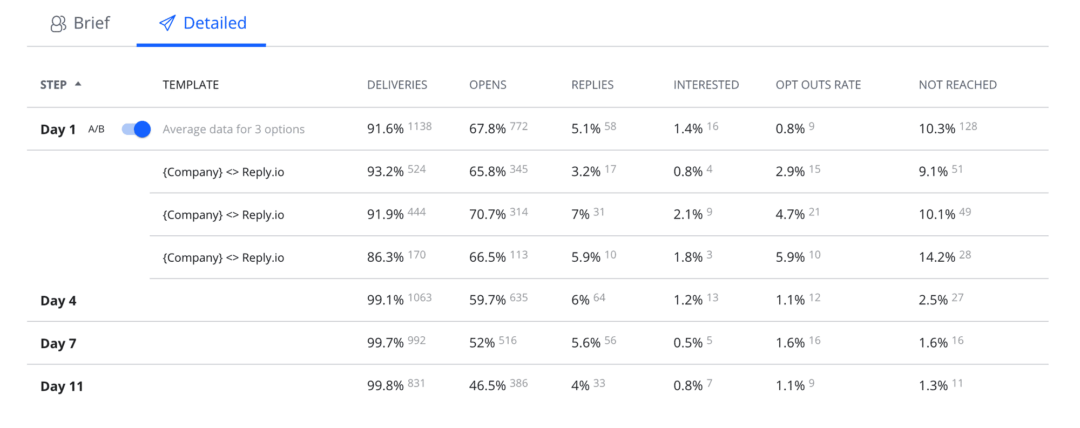

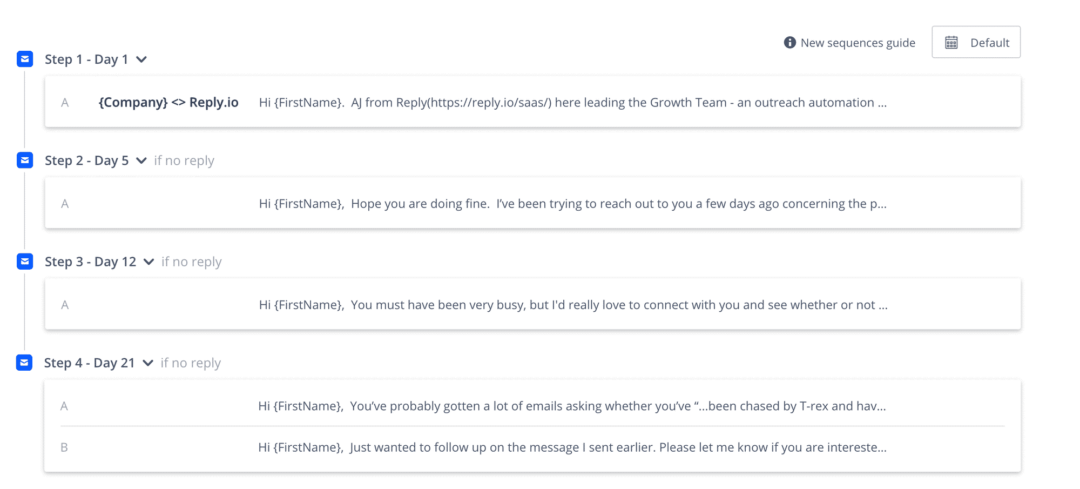

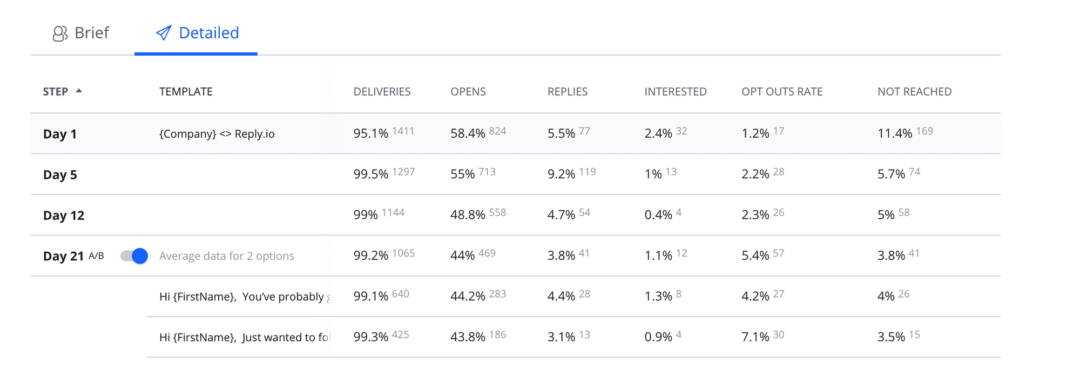

If you feel like you have that high-level understanding, but don’t feel like an expert, that’s okay. There is no need to understand everything from A to Z before you get started. The best idea is to start your sequence as soon as possible. That way you’ll be able to start collecting more info about open rates, reply rates, interested rates, who responds better, etc. Having that data is crucial for success.

A/B testing, step by step

Hopefully, the idea of A/B testing doesn’t seem too daunting now. By breaking it down into the following individual steps, you can quickly get started.

Decide on your goal

As we’ve mentioned, it’s not enough to go into your A/B test with nothing more than curiosity. You need a clearly specified goal; otherwise, it’s all too easy to get caught up in the different metrics available. For an outbound email campaign, you might want to start with positive reply rate, meetings booked, or a similar outcome-based metric.
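To make the goal concrete, it can help to write down exactly how you'll calculate it before the test starts. Here's a minimal Python sketch, assuming you can export three counts per variant (emails sent, positive replies, meetings booked). The `VariantStats` class, the field names, and the numbers are all made up for illustration; they aren't taken from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    sent: int              # emails sent for this variant
    positive_replies: int  # replies you classed as interested
    meetings_booked: int   # meetings that came out of the variant


def rate(count: int, sent: int) -> float:
    """Return count / sent, or 0.0 when nothing was sent."""
    return count / sent if sent else 0.0


def summarize(stats: dict[str, VariantStats]) -> dict[str, dict[str, float]]:
    """Compute the outcome-based metrics for every variant."""
    return {
        name: {
            "positive_reply_rate": rate(s.positive_replies, s.sent),
            "meeting_rate": rate(s.meetings_booked, s.sent),
        }
        for name, s in stats.items()
    }


# Illustrative numbers only, not real campaign results.
results = summarize({
    "A": VariantStats(sent=200, positive_replies=12, meetings_booked=5),
    "B": VariantStats(sent=200, positive_replies=18, meetings_booked=6),
})

for name, metrics in results.items():
    print(name, metrics)
```

Picking one of these numbers as the goal up front keeps you from switching to whichever metric happens to look best once the results are in.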

Pick a variable to test

With your desired outcome in mind, it’s time to choose what you’re going to test. It’s usually best to pick just one variable to change (multivariate testing is possible, but generally best done when you’ve already experimented with changing single variables and are ready for more complex tests). At this point I recommend coming up with a hypothesis that connects your variable to your goal, to help target your testing (e.g. “I predict that this variable change will encourage (reaction) and cause (result)”).

Here are some examples of variables you might want to consider testing.

- Subject lines – Subject lines have a lot of work to do in a short amount of space. If they aren’t good enough, your prospect may never even see your email. Does a question outperform a statement? Do emojis hurt or help your open rate? Should you personalize with the recipient’s name or their company’s name?

- Calls to action – It’s generally accepted that you shouldn’t go for the hard sell in the first email (although feel free to test that). If that’s true, what should you ask people to do after reading your email? Booking a meeting might be your campaign objective, but maybe aiming for a non-committal response and starting a conversation in your first email is more effective. Think about the next steps your prospects could take, then test them out.

- Images – Another accepted practice is sending your emails in plain text, as that’s more in line with how people normally email each other (and is less likely to be picked up by an over-zealous spam filter). However, depending on your product or service, you still might want to consider sending images. We’ve been excited about our recent Vidyard integration, so you might want to test the response to videos in your email. Even if you don’t want to send any other images, many find including a picture in their signature can help increase reply rates. Will it work for you?

- Body text – Of course, it’s important to think about the body of the email itself. How are you opening your email? Are you highlighting the pain points your prospect typically faces or promoting the value your solution offers? Are you going to use humor or do you want to present yourself as completely professional? Bonus point: Your email’s first lines are critical, as they’re often seen as a preview in the email client, so they are a good place to start your testing.

- Sender name – People often focus on the subject line as the key variable affecting open rates, but the sender name can be just as important. ‘no-reply@company.com’ is a terrible sender name, and not worth testing. However, that still leaves several options. Is it better to use your personal name or your company name? What about using both?

- Time and day of send – One of the most common questions I get is ‘what’s the best time to send an email?’ A search online will bring up as many possibilities as you can imagine, for every conceivable combination of day and time. At Reply, we’ve seen the most success sending emails on a Tuesday or Wednesday. However, your results will most likely vary. Test it for yourself.
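Whichever variable from the list above you pick, the mechanics are the same: split your prospects at random and change only that one thing between the two versions. The sketch below shows one way to do that in Python. The function names, the seed, the subject lines, and the example addresses are all hypothetical, and it isn't tied to any specific outreach tool.

```python
import random


def assign_variants(prospects, seed=42):
    """Shuffle prospects and split them into two near-equal groups."""
    rng = random.Random(seed)  # fixed seed so the split can be reproduced
    shuffled = list(prospects)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"A": shuffled[:half], "B": shuffled[half:]}


def changed_fields(a: dict, b: dict) -> list[str]:
    """List the fields that differ between two email variants."""
    return sorted(k for k in a.keys() | b.keys() if a.get(k) != b.get(k))


# Two variants that differ in the subject line only (placeholder copy).
VARIANTS = {
    "A": {"subject": "Quick question about {company}", "body": "shared_body_v1"},
    "B": {"subject": "An idea for {company}", "body": "shared_body_v1"},
}

# Guard against accidentally changing more than one variable.
assert changed_fields(VARIANTS["A"], VARIANTS["B"]) == ["subject"]

prospects = [f"prospect{i}@example.com" for i in range(10)]
groups = assign_variants(prospects)
print(len(groups["A"]), len(groups["B"]))
```

Splitting at random, rather than by hand or alphabetically, helps make sure any difference in results comes from the variable you changed and not from how the lists were built.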

Note: We’ve focused on emails in this post, but outbound campaigns can also include other methods such as cold calls and engagement over social media. It may take a bit more thought, but it’s entirely possible to use the same principles to test these methods. For example, you could A/B test the opening lines of your cold call script (is it better to start with an introduction, a question, or a pleasantry?) or you could test variations of your LinkedIn connection request.

Remember to keep the test as controlled as possible and consider the additional variables these methods have. A cold call by Happy Harry may always outperform one by Blunt Barry, no matter how they start the call, and a connection request from an active LinkedIn account that’s previously engaged with the prospect may always outperform one from an inactive account, regardless of how the request is worded.
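One way to stop the caller (or the LinkedIn account) from skewing the result is to randomize within each one, so Harry and Barry both use opener A and opener B equally often. Here's a rough Python sketch of that idea. The record layout (`id`, `caller`), the function name, and the seed are assumptions made for the example.

```python
import random
from collections import defaultdict


def assign_within_groups(records, group_key, variants=("A", "B"), seed=7):
    """Randomly assign variants separately inside each group (e.g. each caller)."""
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for record in records:
        by_group[record[group_key]].append(record)

    assignment = {}
    for members in by_group.values():
        rng.shuffle(members)  # random order within this caller's list
        for i, record in enumerate(members):
            # Alternate variants so each caller gets an even split.
            assignment[record["id"]] = variants[i % len(variants)]
    return assignment


# Twelve hypothetical calls: six for Harry, six for Barry.
calls = [{"id": i, "caller": "Harry" if i < 6 else "Barry"} for i in range(12)]
assignment = assign_within_groups(calls, "caller")

for caller in ("Harry", "Barry"):
    picked = [assignment[c["id"]] for c in calls if c["caller"] == caller]
    print(caller, picked.count("A"), picked.count("B"))
```

With this setup you can compare A against B for each caller separately, so a naturally strong caller doesn't make one opener look better than it really is.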
